As revelations about the harmful toll of social media on children and teens have become public over the past few years, Congress sought to amp up the pressure on Big Tech and pass legislation for the first time in decades to protect minors and hold companies accountable.

Some of those efforts “came heartbreakingly close” to materializing at the end of the year but ultimately faded and got punted to the new session of Congress that started in January.

One of those bills, co-authored by Sen. Richard Blumenthal, D-Conn., focuses on the safety aspect and gives children and parents greater control over what online content can be viewed.

The issue came to a head when Facebook whistleblower Frances Haugen testified before Congress in 2021 about the harmful effects of social media on children and teenagers and how tech giants kept users engaged to turn profits. Lawmakers like Blumenthal believe the growing bipartisan support on this issue could lead to the passage of tech reforms this time around — possibly this year.

Blumenthal is renewing his push for the Kids Online Safety Act with co-author Sen. Marsha Blackburn, R-Tenn. Over the years, Blumenthal has recounted stories he has heard from parents in Connecticut whose children viewed “extreme” videos on the social media platform TikTok about weight loss, disordered eating and self-harm practices.

Online protections and privacy appear to be a rare issue of bipartisan agreement in Congress, especially in a divided government where there will likely be little compromise with a GOP-led House and Democratic-controlled Senate. But like many efforts on Capitol Hill, it can take a while to navigate the legislative process.

When the lawmakers reintroduce the bill, Blumenthal hopes Congress can quickly act on it, since the Senate is mostly focused on confirming judicial nominations.

“We should do it quickly, because we have the time right now. We have judges, which is important to do, but we don’t have legislation right now to put on the floor, and I’m absolutely convinced that this legislation will pass overwhelmingly,” Blumenthal said in an interview.

“Kids deserve protection. Parents need tools. Both parents and kids have to be given control back over their lives,” he added. “My basic belief is there’s no real partisan divide on consumer protection.”

Tech companies largely avoided major reforms when Congress negotiated its end-of-the-year package to fund the federal government through September. Other than a measure to ban TikTok from government devices, lawmakers were unsuccessful in a last-minute lobbying effort to get into the spending bill both the Kids Online Safety Act and a bill that would update an existing law to extend privacy to users between ages 13 and 16.

Tim Wu, a former White House official who worked on tech antitrust matters, blamed Senate GOP leadership. But Democrats ultimately did not include the measures in the “omnibus” spending bill.

“We had a last chance in the omnibus. We put on the table children’s privacy, children’s protection and tech antitrust as well,” Wu said in a January podcast episode of “On With Kara Swisher.” “But that combination of money, the Republicans actually being pro-tech as opposed to anti- or at least pro-business or not willing to give the Democrats what looked like a victory — played a factor.”

But even with that initial resistance, Blumenthal believes KOSA has a much better chance of passing as a standalone bill, with overwhelming support from both parties.

There has been limited action on the federal level over the past few decades in dealing with young users’ experiences online. The Children’s Online Privacy Protection Act of 1998 was one of the last major pieces of legislation that protects children under age 13. That has put pressure on states like California to pass their own bills addressing such issues.

While the legislation has garnered bipartisan support, and few dispute the merits of strengthening online protections, there have been outspoken critics who argue the bill will “over-censor” young people and add to confusion about what is deemed appropriate content.

Despite these challenges, Blumenthal believes Congress can once again take up tech-related bills like the Kids Online Safety Act and potentially pass them as early as this year, though the timeline is very fluid.

“A bill takes a while — sometimes more than one session, sometimes many sessions — to get the gravitas and support it needs,” he said. “It was an artificial bind we confronted with the omnibus. Most legislation shouldn’t depend on that kind of vehicle, so that’s why I’m more hopeful.”

Blumenthal zeroes in on Big Tech

Blumenthal has made tech-related legislation a major part of his congressional portfolio.

First introduced in early 2022, the Kids Online Safety Act aims to put in place stricter settings on online sites used by minors by allowing children and parents to disable addictive features, enable privacy settings and opt out of algorithmic recommendations. It also requires these companies to conduct an annual independent audit to analyze the risks to minors and see if they are working to reduce them.

The bill establishes a “duty of care” for websites that are likely used by young individuals “to act in the best interests of a minor” in matters related to mental health disorders, addiction-like behaviors, physical violence, online bullying, sexual exploitation and the promotion of narcotic drugs or “predatory, unfair or deceptive marketing practices.”

As children face a growing mental health crisis, those questioning Big Tech are hoping to limit minors’ exposure to content that can lead to eating disorders, self-harm or substance abuse.

Many of these efforts have been recognized by President Joe Biden, who renewed his support for legislation that reins in tech companies during his State of the Union address. And in a Wall Street Journal op-ed from earlier this year, he wrote that he wanted “Democrats and Republicans to come together to pass strong bipartisan legislation to hold Big Tech accountable.” But he has not mentioned specific legislation by name.

“We must finally hold social media companies accountable for the experiment they are running on our children for profit,” Biden said in his February address. “And it’s time to pass bipartisan legislation to stop Big Tech from collecting personal data on kids and teenagers online, ban targeted advertising to children and impose stricter limits on the personal data these companies collect on all of us.”

In addition to KOSA, Blumenthal supports a host of other reforms regarding online privacy and competition. He has sponsored the Open App Markets Act, which would prohibit companies with more than 50 million users in the U.S. from requiring app developers to use in-app payment systems managed by that company.

And he is an original co-sponsor of several other bills that would reshape the tech landscape.

An update to the Children’s Online Privacy Protection Act, known as COPPA 2.0, would ban targeted advertising to minors and extend protections to users between ages 13 and 16. Another bill, the Clean Slate for Kids Online Act, would allow users to request the deletion of personal information collected before age 13. He also supports bills, like the EARN IT Act, to reform Section 230, which grants tech companies immunity from lawsuits over content.

Online safety back in the spotlight

Because the bill came close to passage last year, KOSA is a top priority for Blumenthal. He and Blackburn still need to reintroduce the legislation in the new session of Congress, but there has been some recent work on the broader issue of kids’ online safety.

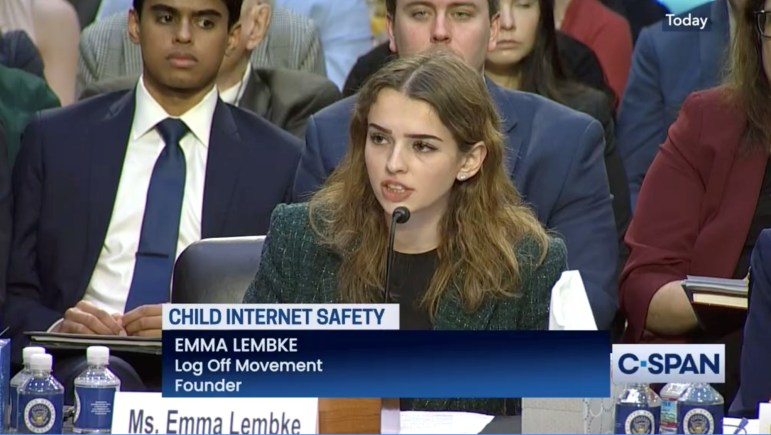

The Senate Judiciary Committee, which Blumenthal and Blackburn both sit on, convened a recent hearing during which online safety advocates spoke about the dangers on the internet. While tech executives have previously testified before Congress, none was at the February hearing.

Emma Lembke, who has had a social media account since age 12, told lawmakers the hours of content she consumed a day on Instagram led to disordered eating. She now runs a group seeking to get minors to spend less time online.

“Senators, my story does not exist in isolation — it is a story representative of my generation, Generation Z,” said Lembke, founder and executive director of LOG OFF Movement.

“As a young woman, being exposed to unrealistic body standards and harmful recommended content severely damaged my sense of self and led me towards disordered eating,” she added. “I became the living embodiment of Facebook’s own 2019 internal research finding that their platforms made body image issues worse for one in three teen girls.”

Another witness, Kristin Bride, is a social media reform advocate who spoke about her 16-year-old son, Carson, who died by suicide in 2020 following cyberbullying on Snapchat and anonymous messaging apps.

Some Republicans floated the idea of keeping those under 16 off social media altogether. But Blumenthal pushed back against such an idea, noting the positives of social media and the difficulty of enforcing such an age requirement. Overall, Democrats and Republicans on the committee largely seemed on the same page about doing something to hold tech companies accountable.

Because of the slim majorities in both chambers, any of these bills will need support from at least nine Republican senators and about half a dozen GOP members in the House to make it to Biden’s desk.

“There’s just no Republican and Democratic algorithm. They drive content. They’re politically blind,” Blumenthal said. “It’s just more eyeballs, more advertising, more addictive content driven at kids for the purpose of enhancing profits, and that’s kind of above political partisanship.”

The bill will ultimately come before the Senate Committee on Commerce, Science and Transportation, though the timeline for legislative action is unclear. KOSA unanimously cleared the committee last year but will need another markup and committee vote in the new session of Congress before it could move toward final passage.

Senate Commerce Committee Chairwoman Maria Cantwell, D-Wash., was optimistic that her panel and Congress will be able to act on KOSA but acknowledged the challenges that lie ahead. Some of the biggest tech companies, like Twitter and Google’s parent company Alphabet, have deployed lobbyists to Congress about bills like KOSA and COPPA 2.0, though some have started to implement more privacy settings to address concerns around young users.

“There are a lot of people who said they were willing to work with us last year, and they ended up just blocking the bill, so we need to figure out how we can get around some of those big players who aren’t really serious about helping,” Cantwell said in a recent interview. “I’m optimistic that we can get something done.”

“I don’t know who in reality disagrees with that,” Cantwell said. “I’m not 100% sure where they’ll be, but we’ve had a lot of Republican members who have shown interest.”

Some lawmakers, however, want to prioritize another bill with much broader protections that would extend beyond minors to all Americans.

Critics sound alarm about content moderation

Other concerns about the online safety legislation run much deeper.

Dozens of organizations wrote a letter last November to Senate Democratic leadership urging that the bill be kept out of the year-end spending bill. Even after it was amended, seven civil liberties and LGBTQ rights groups still expressed concerns a month later.

Organizations like the American Civil Liberties Union and the Gay, Lesbian and Straight Education Network commended the overall goals but warned about “significant parental surveillance” of vulnerable kids and teens. They believe it could have “unintended consequences” when it comes to content filtering and limited access to information for vulnerable children in abusive situations and LGBTQ youth.

“The new language in KOSA would still enable state Attorneys General to bring enforcement actions against online services under a vague ‘duty of care’ standard,” the December letter reads. “Several state AGs are already engaged in campaigns against trans youth and against social media content moderation practices; giving them a tool that allows them to pursue both aims at once is irresponsible and deeply threatening to the lives and rights of LGBTQ youth.”

To quell concerns, Blumenthal and Blackburn made updates to the bill. The new text clarified the “duty of care” section, stipulating that companies “shall act in the best interests” of known minors using their site and that they need to “take reasonable measures in its design and operation of products and services to prevent and mitigate” harm.

But online advocates like Jason Kelley, associate director of digital strategy at Electronic Frontier Foundation, still take issue with that provision because it “ties platform liability to content recommendations,” and it is difficult to manage such content when there is little agreement on what is appropriate for minors.

Kelley said companies’ own tech will have trouble detecting and understanding such harm. As part of another job, he went through software used by schools to block certain content from students. He noted much of the blocked content was “completely benign.”

He also argues that the bill leaves a lot of discretion to state attorneys general who could pressure companies to “over-moderate,” especially with the politicization of certain topics.

“It’s understandable that there is this push to limit extreme content that young people see,” Kelley said. But “is there agreement on what type of content actually causes these harms? I don’t think that there is.”

“The bill would just create massive censorship across the board for everyone. The biggest targets are by any attorney general,” he added.

Kelley’s group believes that legislation addressing competition online, like the bills also supported by Blumenthal, will have the greatest impact. Kelley argued that the lack of competition among social media platforms causes the most harm to all online users.

But as efforts to pass KOSA ramp up again, Blumenthal said conversations with these groups are “ongoing, intensive, productive.”

“I think we have common interests and goals, and we’ve made changes to accommodate their questions and concerns, simply to make sure the bill is clear in its provisions to prevent any abuse over use,” he said. “And I don’t want to mislead anyone — we haven’t altered the fundamental, substantive thrust of the bill.”

The Connecticut Mirror/Connecticut Public Radio federal policy reporter position is made possible, in part, by funding from the Robert and Margaret Patricelli Family Foundation.