When State Commissioner of Education Stefan Pryor unveiled Connecticut’s “School Performance Index” (SPI) website earlier this year, the initial story grabbed front-page headlines, but the substance was underwhelming.

Both the Connecticut Mirror’s Jacqueline Rabe Thomas and the Hartford Courant’s Kathy Megan reported that the SPI site revealed few surprises. If the Commissioner wants to engage the public more effectively in education reform, then one simple step — among other, more substantive ones — is to create better information tools that offer more meaningful comparisons of Connecticut’s public schools, drawing upon lessons learned from the SmartChoices site developed at Trinity College.

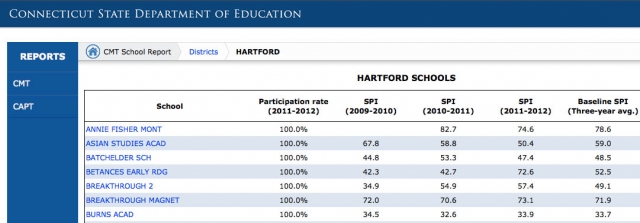

In simple terms, the SPI site averages three years of Connecticut standardized test scores into one composite index for each school. While the underlying test data has been publicly available on the CTReports.com site for several years, it’s now compressed into one number. An SPI of 67 for an elementary school means that students’ average scores across all Connecticut Mastery Tests were “proficient,” which is below the “goal” SPI of 100. Not surprisingly, most of Hartford’s district elementary schools scored in the 30s to the 50s, wealthier suburban districts in the 80s and 90s, and inter-district magnets and charters usually in between. This news builds on press releases from suburban districts trumpeting their high SPI scores. In other words, it tells us what we already know.
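To make the compression concrete, here is a minimal sketch of a three-year composite. It assumes a plain unweighted mean of yearly index values, which may not match the state's actual formula, and the function name and the two sets of yearly values are invented for illustration.

```python
from statistics import mean


def composite_index(yearly_scores):
    """Collapse yearly index values into one number.

    Assumption: an unweighted mean, rounded to one decimal place.
    The state's real weighting is not described in this article.
    """
    if not yearly_scores:
        raise ValueError("need at least one year of scores")
    return round(mean(yearly_scores), 1)


# Hypothetical yearly index values for two made-up schools.
steady = [67.0, 67.0, 67.0]
improving = [57.0, 67.0, 77.0]

print(composite_index(steady))
print(composite_index(improving))
```

Under this assumption, both made-up schools end up with the same composite, even though one held steady and the other rose by twenty points over the period.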

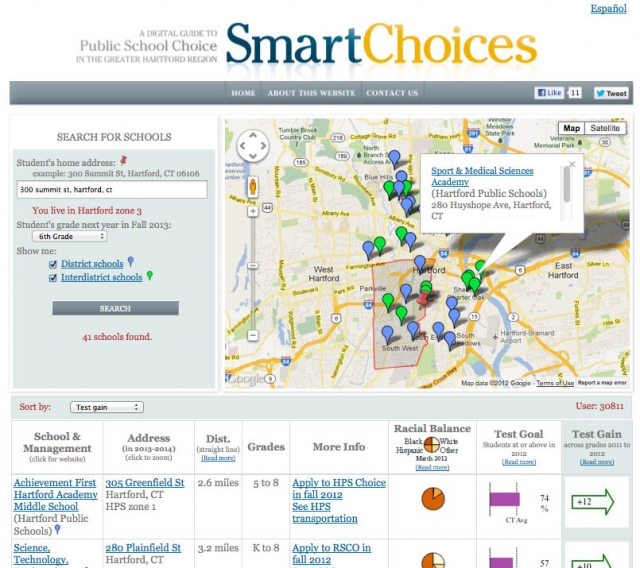

Over the past five years, my Trinity College students, colleagues, and community partners and I have learned some lessons about school information visualization on the web by designing SmartChoices, an interactive map and data search tool for public school options in metropolitan Hartford.

We initially created the site to help urban and suburban parents navigate the rapidly growing number of school choices from competing providers. For instance, the parent of a typical Hartford 6th grader can apply to lotteries for 41 different public schools and programs.

That’s an overwhelming amount of information, and SmartChoices is currently the only tool that displays all of the eligible options on a parent-friendly screen. (Full disclosure: This year SmartChoices received a $26,537 grant from Achieve Hartford, which funded my one-course reduced teaching load and technology support from Academic Computing at Trinity.) While Connecticut’s SPI site and SmartChoices have different objectives, some of our lessons have crossover potential.

Design parent-friendly tools to show school-to-school comparisons.

Connecticut’s SPI site displays school data tables, organized by district. That’s great for district administrators (who already know this data), but lousy for parents trying to evaluate different public school options.

Imagine you are a Hartford parent looking for side-by-side comparisons of district schools, inter-district magnets, and charter schools, operated by different systems (Hartford Public Schools, CREC, and others). You’d be frustrated by the SPI site, because it wasn’t created with your needs in mind.

By contrast, we designed SmartChoices to display all of a student’s public school options (based on eligibility by home address and grade level) on one screen. Rows of school data are not fixed in alphabetical order, but may be sorted by column. Furthermore, we displayed numerical data in easy-to-read charts and arrows, added Spanish translation, and incorporated a map to help parents visualize where schools are located.

Avoid reducing school quality to one data point by providing a set of indicators.

What’s a “good” school? There’s no one-size-fits-all answer to that question, because we value so many different qualities about our public schools, including many that cannot be captured on a spreadsheet.

Still, the state can do a better job of sharing some of the valuable data that it already collects about our schools but rarely shows in parent-friendly ways.

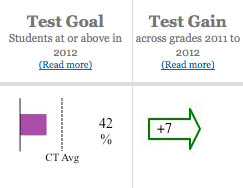

We designed SmartChoices to display publicly available data that matters to most families: home-to-school distance, racial balance, and two different test score indicators to draw a key distinction. “Test goal” measures the percent of students who score at or above goal level (compared to the state average), while “test gain” shows the percentage point increase (or decrease) by grade-level cohorts over one year (this year’s 4th graders compared to last year’s 3rd graders, etc.). Which school is better: one with high percentages of students scoring at goal (which could be due to family background) or one where growing percentages of students are reaching goal over time? Seeing both measures, in combination with other quality factors, is more meaningful than a single index score.

To be clear, the SmartChoices “test gain” measure is not perfect (it fails to account for student mobility in and out of schools), but it’s the best approximation we can calculate from publicly available school-level data.
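As a rough illustration of the cohort comparison described above, the sketch below subtracts last year's percent at goal for one grade from this year's percent at goal for the next grade up. It is not the SmartChoices code: the function name, the dictionary layout, and the sample percentages are hypothetical, and it works only from school-level figures, so it shares the student-mobility limitation.

```python
def cohort_gains(pct_at_goal_this_year, pct_at_goal_last_year):
    """Percentage-point change for each grade-level cohort over one year.

    Both arguments map a grade number to the percent of students at or
    above goal. This year's grade g is compared with last year's grade
    g - 1, so this year's 4th graders are matched to last year's 3rd graders.
    Grades with no prior-year match are skipped.
    """
    gains = {}
    for grade, pct_now in pct_at_goal_this_year.items():
        pct_before = pct_at_goal_last_year.get(grade - 1)
        if pct_before is not None:
            gains[grade] = pct_now - pct_before
    return gains


# Hypothetical school-level percentages, keyed by grade.
this_year = {4: 52, 5: 48}
last_year = {3: 45, 4: 50}

print(cohort_gains(this_year, last_year))
```

In this made-up case the 4th grade cohort gained seven points and the 5th grade cohort lost two, a distinction that a single percent-at-goal figure for the school would not show.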

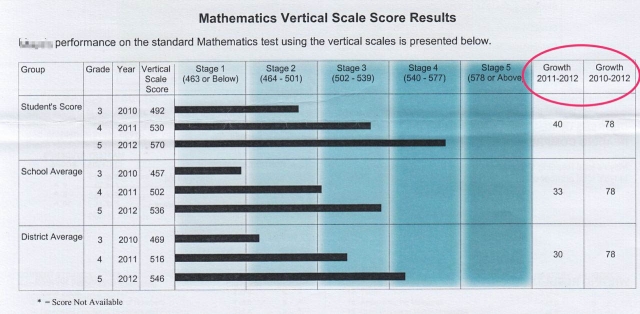

Interestingly, the Connecticut Department of Education collects better-quality “test growth” data. Every fall, as the parent of a child who took the Connecticut Mastery Test the prior spring, I receive an individualized letter that shows my student’s annual test score growth (from 3rd grade to 4th grade, etc.) in comparison to the school and district. But to my knowledge, aggregated “test growth” data for entire schools or districts is not currently available on any public website.

If Connecticut decided to share “test growth” data, it would push the state (for better or worse) into national debates over value-added assessment. But telling us more about student growth would be a vast improvement over the limited information provided by the new school performance index.

By itself, a website will not drive meaningful education reform. It’s merely an information tool, with the potential to empower some people (particularly those with digital literacy and access) to make more informed decisions, which in turn may benefit their individual interests, but not necessarily the public good. A website will not solve our public policy dilemmas. But if the Connecticut Department of Education is devoting resources to building school data information tools, let’s make sure that we’re doing the best job possible in designing them for the people whom these reforms are intended to help.

————————————

SmartChoices was developed by the Cities Suburbs & Schools Project, with Jean-Pierre Haeberly and David Tatem in Academic Computing at Trinity, and the open-source code is freely available upon email request to the author.