Facebook’s long-awaited change in how it handles political advertisements is only a first step toward addressing a problem intrinsic to a social network built on the viral sharing of user posts.

The company’s approach, a searchable database of political ads and their sponsors, depends on the company’s ability to sort through huge quantities of ads and identify which ones are political. Facebook is betting that a combination of voluntary disclosure and review by both people and automated systems will close a vulnerability that was famously exploited by Russian meddlers in the 2016 election.

The company is doubling down on tactics that so far have not prevented the proliferation of hate-filled posts or ads that use Facebook’s capability to target ads to particular groups.

If the policy works as Facebook hopes, users will learn who has paid for the ads they see. But the company is not revealing details about a significant aspect of how political advertisers use its platform: the specific attributes the ad buyers used to target a particular person for an ad.

Facebook’s new system is the company’s most ambitious response thus far to the now-documented efforts by Russian agents to circulate items that would boost Donald Trump’s chances or suppress Democratic turnout. The new policies announced Thursday will, in several ways, make it harder for somebody to exploit the precise vulnerabilities in Facebook’s system that the Russians used in 2016:

First, political ads that you see on Facebook will now include the name of the organization or person who paid for them, reminiscent of the disclaimers required on political mailers and TV ads. (The ads Facebook identified as placed by Russians carried no such tags.)

The Federal Election Commission requires political ads to carry such clear disclosures, but as we have reported, many candidates and groups on Facebook haven’t been following that rule.

Second, all political ads will be published in a searchable database.

Finally, the company will now require that anyone buying a political ad in its system confirm that they’re a U.S. resident. Facebook will even mail advertisers a postcard to make certain they’re in the U.S. Facebook says ads by advertisers whose identities aren’t verified under this process will be taken down starting in about a week, and those advertisers will be blocked from buying new ads until they have verified themselves.

While the new system can still be gamed, the specific tactics used by the Russian Internet Research Agency, such as an overseas purchase of ads promoting a Black Lives Matter rally under the name “Blacktivist,” will become harder — or at least harder to do without getting caught.

The company has also pledged to devote more employees to the issue, including 3,000-4,000 more content moderators. But Facebook says these will not be additional hires; they will be included in the 20,000 already promised to tackle various moderation issues in the coming months.

What is Facebook missing?

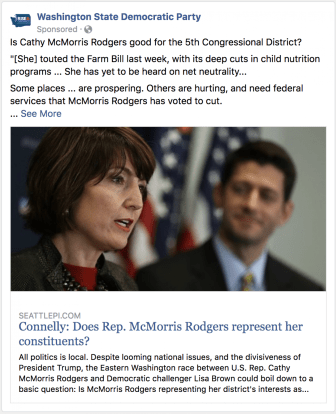

The most obvious flaw in Facebook’s new system is that it misses ads it should catch. Right now, it’s easy to find political ads that are missing from Facebook’s archive. Take this one, from the Washington State Democratic Party. Just minutes after Facebook finished announcing its launch of the tool, a participant in ProPublica’s Facebook Political Ad Collector project saw this ad, criticizing Republican congresswoman Cathy McMorris Rodgers… but it wasn’t in the database.

And there are others.

The company acknowledged that the process is still a work in progress, reiterating its request that users pitch in by reporting the political ads that lack disclosures.

Even as Facebook’s system gets better at identifying political ads, the company is withholding a critical piece of information in the ads it’s publishing. While we’ll see some demographic information about who saw a given ad, Facebook is not indicating which audiences the advertiser intended to target — categories that often include racial or political characteristics and which have been controversial in the past.

This information is critical to researchers and journalists trying to make sense of political advertising on Facebook. Take, for instance, this ad promoting the environmental benefits of nuclear power, from a group called Nuclear Matters: the group chose specifically to show it to people interested in veganism — a fact we wouldn’t know from looking at the demographics of the users who saw the ad.

Facebook said it considers the information about who saw an ad — age, gender and location — sufficient. Rob Leathern, Facebook’s Director of Product Management, said that the limited demographics-only breakdown “offers more transparency than the intent, in terms of showing the targeting.”

The company is also promising to launch an API, a technical tool that will allow outsiders to write software that looks for patterns in the new ad database. It says the API will arrive “later this summer” but hasn’t said what data it will contain or who will have access to it.

ProPublica’s own Facebook Political Ad Collector tool, which also collects political ads spotted on Facebook, has an API that can be accessed by anyone. It also includes the targeting information, which users can see on each ad that they view.

Facebook said it would not release data about ads flagged by users as political and then rejected by the system. We’re curious about those, and we know firsthand that its software can be imperfect. We’ve attempted to buy ads specifically about our journalism that were flagged as problematic because the ads “contained profanity,” or that were misclassified as discriminatory ads for “employment, credit or housing opportunities.”

Facebook’s track record on initiatives aimed at improving the transparency of its massively profitable advertising system is spotty. The company has said it’s going to rely in part on artificial intelligence to review ads — the same sort of technology that the company said in the past it would use to block discriminatory ads for housing, employment and credit opportunities.

When we tested the system almost a year after a ProPublica story showed Facebook was allowing advertisers to target housing ads in a way that violated Fair Housing Act protections, we found that the company was still approving housing ads that excluded African-Americans and other “multicultural affinities” from seeing them. The company was pressured to implement several changes to its ad portal, and a Fair Housing group filed a lawsuit against the company.

Facebook also plans to rely in part on users to find and report political ads that get through the system without the required disclosures.

But its track record of moderating user-flagged content — when it comes to both hate speech and advertising — has been uneven. Last December, ProPublica brought 49 cases of user-flagged offensive speech to Facebook, and the company acknowledged that its moderators had made the wrong call in 22 of them.

The company admits it’s playing a “cat and mouse game” with people trying to pass political ads through its system unnoticed. Just last month, Ohio Democratic gubernatorial candidate Richard Cordray’s campaign ran Facebook ads criticizing his opponent, but from a page called “Ohio Primary Info.”

The need for ad transparency goes way beyond Russian bad actors. Our tool has already caught scams and malware disguised as politics, which users raised as a problem years before Facebook made any meaningful change.

If you flag an ad to Facebook, please report it to us as well by sending an email to political.ads@propublica.org. We will be watching to see how well Facebook responds when users flag an ad.

How will they enforce the new rules?

It’s one thing to create a set of rules, and another to enforce them consistently and on a large scale.

Facebook, which kept its content moderation and hate speech policies secret until they were revealed by ProPublica, won’t share the specific rules governing political ad content or details about the instructions moderators receive.

Leathern said the company is keeping the rules secret to frustrate the efforts of “bad actors who try to game our enforcement systems.”

Facebook has said it’s looking to flag both electoral ads and those that take a position on its list of 20 “national legislative issues of public importance.” These range from the concrete, like “abortion” and “taxes,” to broad topics like “health” and “values.”

Facebook acknowledges its system will make mistakes and says it will improve over time. Ads for specific candidates are relatively easy to detect. “We’ll likely miss ads when they aim to persuade,” said Katie Harbath, Facebook’s Global Politics and Government Outreach Director.

We plan to keep an eye out for ads that don’t make it into the archive by checking whether ads that our Political Ad Collector tool finds are missing from Facebook’s database.

Want to help?

We need your help building out our independent database of political ads! If you’re still reading this article, we’re giving you permission to stop and install the Political Ad Collector extension. Here’s what you need to know about how it works.

You can also help us find other people who can install the tool. We are especially in need of people who aren’t ProPublica readers already. We need people from a diverse set of backgrounds, and with different perspectives and political beliefs. Please encourage your friends and relatives — especially the ones you avoid talking politics with — to install it.

This story was first published May 24, 2018, by ProPublica, a Pulitzer Prize-winning investigative newsroom.